mHC: Manifold-Constrained Hyper-Connections

Zhenda Xie*, Yixuan Wei, Huanqi Cao, et al.

DeepSeek-AI

arXiv:2512.24880v2 January 2026

Abstract

This paper introduces Manifold-Constrained Hyper-Connections (mHC), a way to make neural network connections more powerful while keeping them stable.

The problem: Hyper-Connections (HC) made networks more capable but also unstable at scale. The solution: Constrain the connection matrices to a special mathematical space (the Birkhoff polytope) that preserves important properties.

Hyper-Connections (HC) extend residual connections by expanding the residual stream width and introducing learnable mixing. However, the unconstrained nature of HC compromises the identity mapping property, causing training instability and limited scalability.

We propose Manifold-Constrained Hyper-Connections (mHC), which projects the residual connection space onto the Birkhoff polytope (doubly stochastic matrices). This:

- Restores the identity mapping property

- Preserves signal means and regularizes norms

- Prevents vanishing/exploding gradients

- Maintains HC's representational capacity

Empirical results demonstrate stable training at scale with only 6.7% overhead for expansion rate n=4.

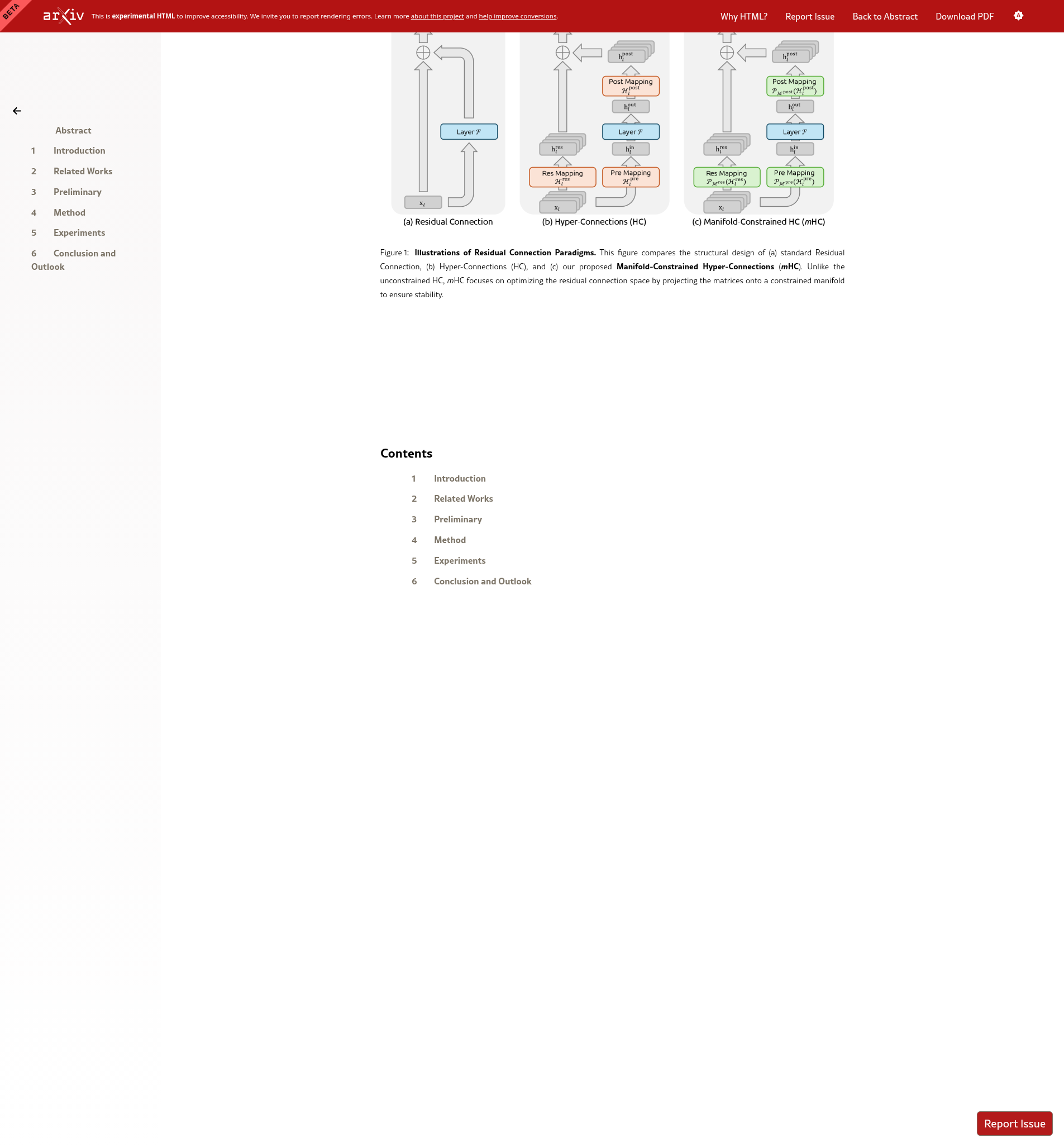

Figure 1: Residual Connection Paradigms

This figure compares (a) standard Residual Connection, (b) Hyper-Connections (HC), and (c) our proposed Manifold-Constrained Hyper-Connections (mHC). Unlike unconstrained HC, mHC projects the residual connection space onto a constrained manifold to ensure stability.

What You're Seeing

- (a) ResNet: Simple skip connection: output = F(x) + x

- (b) HC: Multiple streams with learnable mixing matrices

- (c) mHC: Same as HC but matrices constrained to Birkhoff polytope

Architectural Comparison

ResNet (2015):

Hyper-Connections (HC):

mHC (constrained HC):

where P projects onto the Birkhoff polytope (doubly stochastic matrices)

Introduction

The Story So Far

Remember from Level 3 how ResNet changed everything in 2015? The key was the identity mapping: the ability to pass information unchanged through layers. This allowed training of very deep networks.

Then came Hyper-Connections (2024), which said: "What if we had multiple parallel streams instead of just one skip connection?" This worked great for small models, but...

The Core Issue

The mixing matrices in HC could do anything. They could amplify signals by 1000× or shrink them to almost zero. Over many layers, this compounds:

- Multiply by 2, ten times: 2¹⁰ = 1024 (explosion!)

- Multiply by 0.5, ten times: 0.5¹⁰ = 0.00098 (vanishing!)

mHC's solution: Constrain those matrices so each row and column sums to 1. This means they can mix information, but they can't amplify or attenuate it overall.

Background: The Identity Mapping Property

The success of residual learning stems from the identity mapping property. For ResNet, recursive application yields:

The term x_l preserves unmodified signal from shallow to deep layers, ensuring stable gradients and enabling deep architectures.

HC's Instability

HC extends this to:

The composite mapping Π Hʳᵉˢ is unconstrained. Analysis shows its singular values can diverge exponentially with depth:

- Explosion: σ_max(ΠH) → ∞ as L → ∞

- Vanishing: σ_min(ΠH) → 0 as L → ∞

- Loss of identity: ΠH ≠ I in general

mHC Solution

By projecting Hʳᵉˢ onto the Birkhoff polytope (doubly stochastic matrices), we ensure:

- Row sums = 1, column sums = 1 (mean preservation)

- Non-negative entries (convex combinations)

- Closure under multiplication: doubly stochastic × doubly stochastic = doubly stochastic

- Identity achievable: I is doubly stochastic

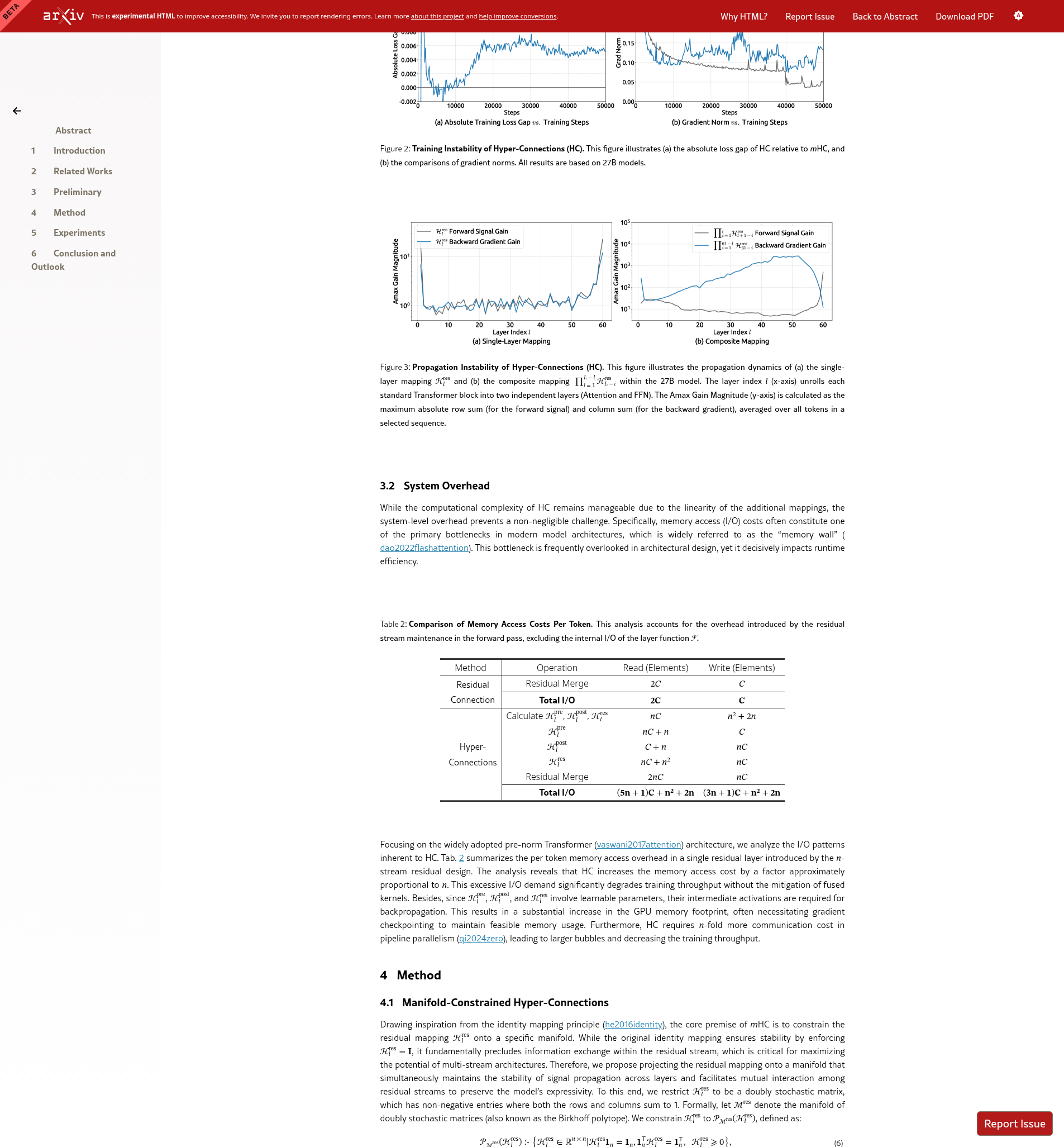

Figure 2: Training Instability of Hyper-Connections

This figure illustrates (a) the absolute loss gap of HC relative to mHC, and (b) the comparisons of gradient norms. All results are based on 27B models.

What This Shows

- Left (Loss Gap): HC's loss suddenly jumps up around step 12k

- Right (Gradient Norms): HC's gradients explode at the same time

- mHC: Stays stable throughout training

These are 27 billion parameter models—the kind used in production AI systems. The instability isn't a theoretical concern; it's a real problem at scale.

Empirical Evidence

Figure 2 demonstrates HC's training instability on large-scale models (27B parameters):

- Panel (a): HC exhibits unexpected loss surge around step 12k, correlating with instability in gradient norms

- Panel (b): Gradient norm divergence confirms the theoretical prediction of unbounded signal amplification

- mHC baseline: Maintains stable loss and gradient profiles throughout training

This validates the theoretical analysis: unconstrained HC compromises training stability at scale, necessitating the manifold constraint.

Key Contributions

- Framework: Manifold-Constrained Hyper-Connections (mHC), a general method for stabilizing HC by projecting onto the Birkhoff polytope

- Theory: Proof that doubly stochastic constraints restore identity mapping property and ensure signal conservation

- Algorithm: Efficient Sinkhorn-Knopp projection with infrastructure optimizations (kernel fusion, mixed precision, selective recomputation)

- Empirical: Demonstrated stable training at scale with 6.7% overhead for n=4

- Understanding: Provides deeper insight into topological architecture design

🎯 Main Results

Why This Matters

mHC enables the best of both worlds: HC's representational power combined with ResNet's training stability. For the first time, researchers can train massive Hyper-Connection models without worrying about instability. This opens the door to deeper, more capable AI systems.

Congratulations!

You've completed the mHC Course

From grade school basics to understanding cutting-edge AI research, you've journeyed through the entire landscape of neural network architecture design. You now understand one of the most advanced papers in deep learning!

What You Can Do Now

- ✅ Read and understand the mHC paper on arXiv

- ✅ Explain residual connections, HC, and mHC to others

- ✅ Understand why manifolds matter for AI stability

- ✅ Follow current research in neural architecture design

- ✅ Pursue deeper study in deep learning and mathematics